I work at the intersection of health and immigrant and refugee settlement now. To be completely honest, I thought the healthcare sector was much further along when it comes to digital transformation. Turns out, not really. At least, not in the comprehensive and sectoral way I thought it might be. It's a regulated sector, with strict laws and regulations when it comes to Personal Health Information (PHI), so it has to be even more thoughtful about how it approaches tech and people. Other than that, there are as many questions, concerns, discussions, innovations, ideas, curiosity, and uncertainty as anywhere else.

Good and bad, I suppose. You might be wondering: if I write about and focus on immigrant and refugee settlement and tech, why the health-specific focus? I've always said we have much to learn as a sector from other sectors, especially health, social work, and education (the private sector, meh, not so much).

There is a lot to learn here from a sector that is, and isn't, much further ahead of us in some ways. I mean, yeah, I would love an entire massive faculty dedicated to looking at digital transformation and the immigrant and refugee-serving sector, with a practitioner focus (Bridging Divides gives us a wee bit of that, but not enough sector focus, IMHO). In the absence of that, seeing where others are going is certainly useful.

So I was excited to watch this reflective webinar from the University of Toronto’s Temerty Centre for AI Research and Education in Medicine (T-CAIREM). It's a monster initiative from one of Canada's largest and most respected universities. It's worth a watch, if only to realize how far we actually haven't come in the five years since their first session.

Watch the recent event here:

and the previous session from 5 years ago here:

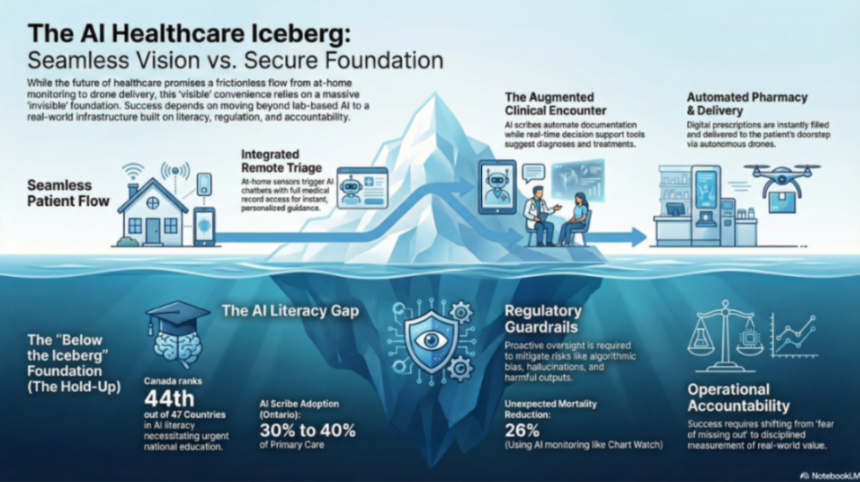

I haven't actually watched that session from 5 years ago, but I will (and will probably create a Notebook-generated post exploring the promises from then versus practice now, which could be interesting). I'm sharing today because of the AI iceberg issue that is touched on, but, upon reflection, not as deeply as I would have liked.

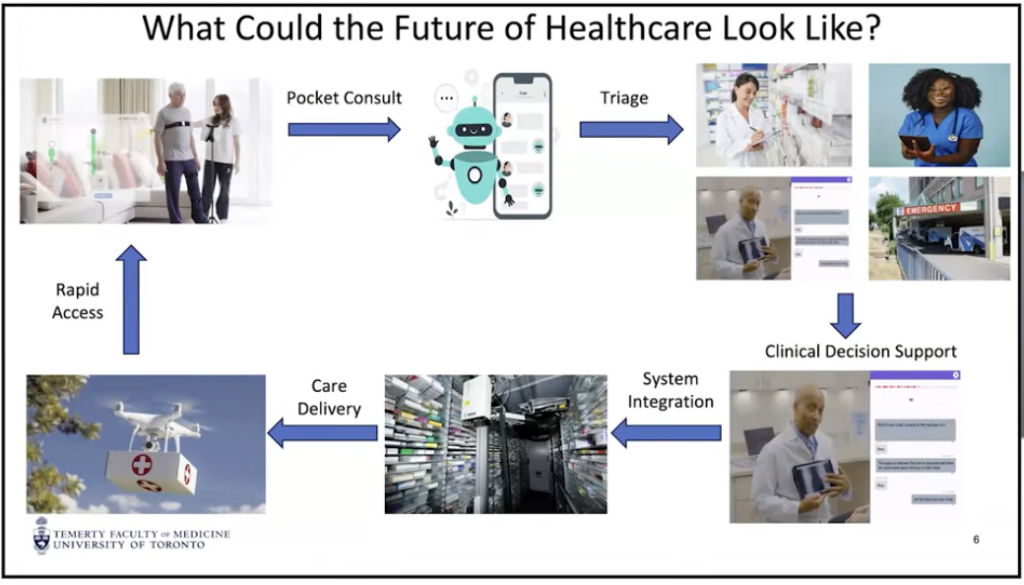

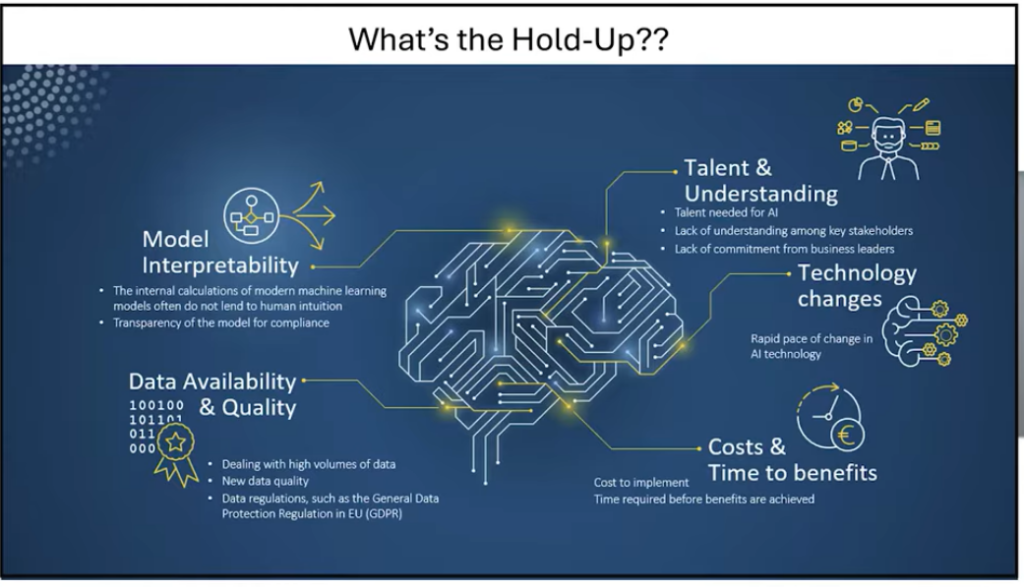

What struck me in the presentation was not only the aspirational picture of what healthcare could look like from a patient perspective (some of which already exists today in experiments), but what it takes to actually get there.

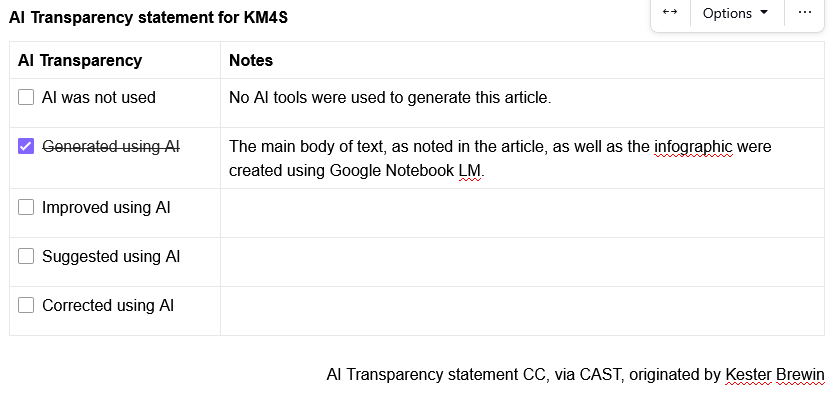

I used Google NotebookLM to do some thinking for me, based on the recent webinar alone. If you're not familiar with NotebookLM, you can upload or add sources, and it's really good at citing only those sources, quite accurately, with footnotes indicating where in the text or transcript it pulled the information. That means much less misinformation (what many call "hallucinations", but that's too feckin' human a word for a machine) than many chatbots. You can also create infographics, slide decks, blog posts, and video overviews, as well as audio overviews (podcast style) of your output. I've previously written about my experimenting with Notebook over here.

Here is what I asked, and the output, with no editing from me.

Analyze the section of the presentation that focuses on "what could the future of healthcare look like?", where the presenter outlines how smoothly at-home monitored care could flow, all the way to drone delivery of medication, followed by the "what's the hold up" slide. The future of healthcare discussion looks very interesting, but what needs to be in place in human, technological, and regulatory contexts "below the iceberg" for this flow to work securely, confidentially, and confidently?

Here are the two slides from the webinar (you can jump to that specific part of the webinar here).

Here is an infographic I asked Notebook to create from the output below:

(the rest of this text is AI generated, with no edits from me unless noted)

For the futuristic vision of healthcare—ranging from at-home sensors to AI-assisted diagnosis and drone delivery—to function securely and confidently, several foundational elements must be established "below the iceberg." Based on the sources, these requirements span human, technological, and regulatory contexts.

The most significant "bottleneck" to this future is not the technology itself, but society and human acceptance.

The "smooth flow" depends on a robust, invisible architecture that ensures data is both usable and safe.

A secure future requires a "playbook" that balances innovation with public safety.

Would you like me to create a tailored report that expands on these "below the iceberg" requirements into a more formal strategy document for healthcare leaders?

(I said yes and asked for a full tailored report, shared below with no edits)

As we stand at the threshold of a paradigm shift, we must navigate a transition toward a future where healthcare is no longer a series of discrete episodes but a continuous, integrated flow. To operationalize this vision, we are moving toward a target state defined by:

The innovations listed above represent only the visible peak of healthcare transformation. As a strategic imperative, we must focus our investment on the "Below the Iceberg" foundations—the often invisible human, technological, and regulatory infrastructures. Without these sub-surface requirements, the visible "promise" of AI will inevitably collapse into "peril."

Technological sophistication is irrelevant if the workforce lacks the literacy to wield it. We are currently facing a national crisis of readiness; the 2025 KPMG survey ranked Canada 44th out of 47 countries in AI literacy. In contrast, the United Kingdom is already scaling a program to offer free AI literacy resources to all 65 million of its citizens.

To catalyze a national network, we must adopt the blueprint established by the Temerty Centre for AI Research and Education in Medicine (T-CAIREM):

We must transition AI from a "tool" to a collaborative partner in education. At the University of British Columbia (UBC), AI is already being integrated into graduate student study groups, acting as a "virtual student" that pulls up real-time facts and challenges human assumptions. AI serves as:

To build a robust technological foundation, we must define and deploy three distinct AI architectures.

| AI Type | Strategic Definition | Healthcare Application |
|---|---|---|
| Generative AI | Utilizing billions of data points to generate new text, images, or video. | Summarizing clinical encounters or generating personalized patient education. |
| Agentic AI | AI designed to take autonomous actions based on user preferences. | A system that can autonomously book a travel itinerary to London, selecting flights and hotels based on learned taste. |
| Multimodal AI | Systems that process "senses": sight, sound, smell, taste, and touch. | Robots that can feel the texture of skin or identify visual changes in wound healing. |

Operationalizing AI requires massive compute power. Strategic partnerships, such as the collaboration between T-CAIREM, Google Cloud, and MIT, are vital. These initiatives provide the "credits" necessary for researchers and students to "play" with machine learning models and real-world datasets for free, removing the financial barriers to innovation.

While Canada remains in the exploratory phase, Japan has already moved to "practice," deploying robots in nursing homes that utilize auditory and tactile sensors to provide care. We must bridge this gap by investing in machines that mimic the five human senses to support home-based care.

The landscape of AI is a balance of "Promise vs. Peril." While a New England Journal of Medicine AI study found chatbots to be as effective as human therapists, and blinded studies show AI empathy scores can be ten-fold higher than those of physicians, the risks are catastrophic.

We have seen the consequences of "hallucinations" and biased algorithms that negatively impact marginalized populations. Most tragic are the cases of AI-encouraged self-harm, such as the Eliza case in Belgium and the recent Character.AI case in Florida.

We advocate for a strategic "Balanced Playbook" that protects against harm without stifling innovation.

We will deploy AI through a phased, risk-managed sequence focused on systemic value.

To avoid the "Wild West" of previous technology rollouts, we must adopt a disciplined, fail-fast approach to ROI:

Humans remain essential for contextualization and social interaction. However, the definition of "menial" tasks will constantly shift upward as AI masters more complex reasoning. We must embrace a symbiotic relationship where AI handles the evolving administrative load, allowing clinicians to focus on the human-centric "touch" that machines cannot replicate.

The path from promise to practice requires us to move beyond high-level governance talk and toward applied operations investment. We must equip our institutions with the physical infrastructure and the cultural literacy required to handle these tools.

The most immediate strategic lever for clinicians and students is the mastery of Prompt Engineering. By learning to provide specific context and clarity in queries, we can dramatically decrease hallucinations and maximize the utility of Generative and Agentic AI. If we remain disciplined, the benefits of this revolution will significantly outweigh the risks. We must act now to ensure Canada is no longer "late to the party," but a leader in the global AI revolution.
