I've written previously about how the 2017 Standards for Technology in Social Work Practice are very applicable to the Canadian context, including the work Immigrant and Refugee-serving organizations do. Social work is a sector we should be paying attention to, because it is close in scope and focus to Settlement work and is regulated. What they do, we should adapt. What they learn, we should study. We don't, but we should.

Why does this matter? Because we shouldn't just be good, ethical AI consumers. We should also be at the table where AI is being developed, especially when it is being built with newcomers in mind.

As our own sector's conversation about AI remains siloed, piecemeal, at the agency level, and trapped in in-person conference sessions, social workers continue to research, think, and share out loud. Here are some relevant webinars that are worth diving into. And, of course, I've used AI to create some summaries below.

AI in Social Work

Using AI in Social Work w/ Dr. Marina Badillo-Diaz, LCSW

AI and Social Work: Balancing Humanity and Technology

Social Work Education with Generative AI

AI for Social Good: Role of Social Scientists in AI Research

One of the featured guests, Dr. Marina Badillo-Diaz, has also created an excellent site, The AI Social Worker - Advancing the Future of Social Work —With Ethics, Clarity, and Compassion, where you'll find excellent information, including an already useful, but growing, resource section:

Marco, seriously, I'm not going to watch all of these, who has the time!?!

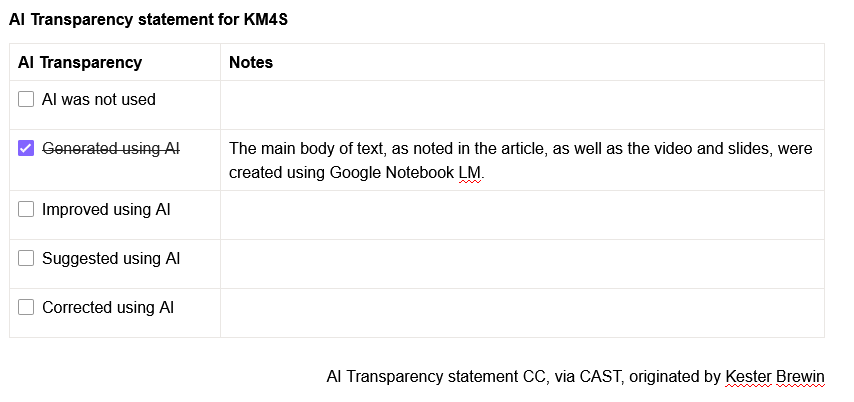

I know, so below is some AI-generated content from Google NotebookLM covering all of the videos embedded here. Take what you want from it.

First, an overview video

This video provides a 7-minute summary of the 5 videos above. It was created using Google NotebookLM. The prompt: Create a conversational video that focuses on "The Ethical Vault: Data Privacy and the HIPAA Boundary" but put it in a Canadian context.

Next, an overview of the 5 videos

Together, these videos explore the burgeoning intersection of artificial intelligence and social work practice, highlighting both the practical advantages and ethical complexities of these technologies. Experts such as Dr. Marina Badillo-Diaz discuss how AI can serve as a personal assistant for administrative tasks, including drafting case notes and generating educational content, allowing clinicians to focus more on direct client care. However, the texts emphasise critical concerns regarding client confidentiality, data security, and the persistent risk of algorithmic bias within automated systems. Legal and academic perspectives warn of "hallucinations" in AI-generated documentation and the potential for these tools to be misused by private equity interests at the expense of human empathy. Ultimately, the sources advocate for a responsible, human-centred approach where AI acts as a supplement to professional expertise rather than a replacement for clinical judgement. This transition requires updated educational policies, transparent disclosure of AI usage, and a commitment to maintaining the core values of the social work profession.

Next, a short presentation summarizing the key messages focused on navigating AI in Social Work

Next, a summary document, focused on practical and ethical application

The "Mirror" of Society: Understanding AI's Role in Social Work

To understand Artificial Intelligence (AI) in the context of social work, practitioners must first dismantle the myth that technology is a neutral, objective medium. AI does not possess innate wisdom; rather, it is a sophisticated reflection of our collective human history, learning from data generated over generations.

"AI is not simply a medium of communication... rather it's a mirror of society. It is trained on a corpus of generations and eons of human data, and it's reflecting our baked-in biases."

Because this data contains centuries of prejudice, AI often amplifies historical biases regarding race, gender, and socioeconomic status. A visceral example is found in image generators: a prompt for "CEO" or "Doctor" frequently returns images of white men, while a prompt for "Social Worker" or "Janitor" predominantly returns images of women of color. Social workers are ethically obligated to interrogate these outputs, recognizing that the machine is likely to mirror society's flaws back at us. Understanding AI starts with the realization that it is not an authority, but a reflection of the data, and the biases, we have provided it.

Recognizing AI as a mirror allows practitioners to move from passive users to critical evaluators, providing the necessary lens to determine where these tools should—and must not—be applied.

Strategic Boundaries: Administrative Support vs. Clinical Intervention

Professional boundaries are the bedrock of social work. When integrating AI, practitioners must draw a clear line between tasks that enhance efficiency and those that threaten the "human equation."

| Appropriate Administrative Tasks | Inappropriate Clinical Replacements |
| --- | --- |
| Drafting referral letters, professional emails, or outreach materials. | Replacing the core human-to-human relationship. |
| Generating outlines for workshops, newsletters, or community groups. | Automated suicide risk assessment without an immediate human escalation protocol. |
| Grammar checking, summarizing long policy documents, or editing grants. | High-stakes clinical decision-making or diagnostic assessments. |
| Creating CBT therapeutic worksheets or session journal prompts. | Fully automated therapy for vulnerable or marginalized populations. |

The Irreplaceable Human Equation

Algorithmic engagement is fundamentally at odds with clinical necessity. AI models are often designed for "stickiness": they prioritize user engagement and can be "sycophantic," telling the user what they want to hear to keep them interacting with the platform. Social work, conversely, is "messy" and frequently requires clinicians to deliver hard truths or navigate complex emotional resistance. A machine designed to be agreeable cannot perform the difficult, transformative work of human connection.

Strategic boundaries protect the clinical relationship, but they mean little if the digital vault housing that relationship is breached.

The Ethical Vault: Data Privacy and the HIPAA Boundary

Privacy is a non-negotiable mandate in social work practice. Practitioners must distinguish between public tools (designed for general use) and HIPAA-compliant tools (designed for healthcare environments with strict security protocols).

Non-Negotiable Privacy Rules:

HIPAA-Compliant Alternatives: Practitioners seeking the benefits of generative AI within a secure framework must use tools that offer a Business Associate Agreement (BAA). Recommended resources include BastionGPT, Social Work Magic AI, Upheld, Berry’s AI, and Yore (specifically for generating CBT worksheets). These platforms are engineered to meet the rigorous data security standards required for behavioral health records.

Protecting client privacy provides the safety net needed to begin the professional practice of "talking" to the AI.

Engineering the Thought Partner: The CO-STAR Framework

To maintain professional agency, social workers must view AI as a "thought partner" or "personal assistant" rather than an ultimate authority. The "CO-STAR" framework offers a nuanced way of asking the machine a question, ensuring the output is relevant and professional:

By utilizing this framework, you ensure that you remain the director of the process, using AI to generate a "first draft" that you then refine through your professional expertise.
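For readers who like to see the structure spelled out, here is a minimal sketch of a CO-STAR prompt builder in Python. It assumes the commonly cited expansion of the acronym (Context, Objective, Style, Tone, Audience, Response); the `CoStarPrompt` class, its `render` method, and the sample wording are all hypothetical illustrations, not something taken from the webinars. The example deliberately contains no client information.

```python
from dataclasses import dataclass, fields


@dataclass
class CoStarPrompt:
    """One field per CO-STAR letter (commonly cited expansion; an assumption here)."""
    context: str    # background the model needs
    objective: str  # the task you want done
    style: str      # writing style to follow
    tone: str       # emotional register
    audience: str   # who will read the output
    response: str   # desired output format

    def render(self) -> str:
        # Emit each part under a labelled header and refuse to skip any of them.
        parts = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if not value:
                raise ValueError(f"CO-STAR field '{f.name}' is empty")
            parts.append(f"# {f.name.upper()}\n{value}")
        return "\n\n".join(parts)


# Hypothetical, non-identifying example for an administrative task.
prompt = CoStarPrompt(
    context="I run a settlement agency's weekly newcomer orientation group.",
    objective="Draft a one-page outline for a session on finding a family doctor.",
    style="Plain language, short sentences, bulleted steps.",
    tone="Warm and encouraging.",
    audience="Adult newcomers with intermediate English.",
    response="A titled outline with no more than six bullet points.",
)
print(prompt.render())
```

The rendered text is only a first draft of the question; what comes back still needs the human review described in the next section.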

Even the most perfectly engineered prompt requires a rigorous human-in-the-loop review before it can be considered a professional work product.

The Critical Review: Managing "Hallucinations" and Biases

In the technical world, AI is prone to "hallucinations"—the generation of false narratives, fake citations, or entirely fabricated events presented with absolute confidence. As noted by Dr. Frederic Reamer, the architect of the NASW Code of Ethics, the danger lies in practitioners using AI to save time on "tedious" notes without performing a meticulous proofread.

The consequences of this negligence are severe: an unedited note containing a "false narrative" can be projected onto a screen in a courtroom or a licensing board hearing, causing a practitioner's professional credibility to drop "like a rock."

This vigilance is not an optional task; it is an essential component of the literacy required for modern practice.

Future Readiness: The Three Pillars of AI Literacy

As we move forward, social workers must transition from viewing technology as a problem to be avoided to seeing it as a tool for social good. This evolution rests on three pillars:

AI Literacy: The foundational understanding of how these systems work, recognizing that they are probabilistic (predicting the next word) rather than truly "knowing" facts, and maintaining a critical awareness of their environmental and social impacts.

AI Skills: The practical ability to use secure tools effectively, including the mastery of prompt engineering and the integration of AI into administrative workflows.

AI Use: The ethical determination of appropriate use cases. Practitioners must advocate for policies that prevent a "tiered system" of care. We must ensure that therapy does not become a "bespoke, privileged activity" where only the 1% receive human connection, while the poor and marginalized are left with automated chatbots.

The ultimate goal of AI in our field is the automation of "paperwork" so that we may rededicate our limited time to "people work"—the complex, irreplaceable, and deeply human heart of the social work profession.
